Research Illusions: The Magic Behind Poor-Quality Science

The Hidden Trap Doors and Sleight-of-Hand in Scientific Research

In a magic trick, the audience believes they've seen everything clearly—every action, every prop, every movement of the magician's hands. Yet the magician, through subtlety, sleight-of-hand, and misdirection, skillfully conceals crucial parts of the process. The audience marvels at what seems astonishing, extraordinary, or seemingly impossible.

Similarly, in poor-quality research, everything on the surface can appear transparent: the methods section seems thorough, the results look robust, the conclusions seem logically derived. Yet beneath this veneer, the researchers—like skilled magicians—have carefully obscured crucial steps or directed attention away from questionable research practices.

This newsletter is where we stop applauding and start investigating. If the science seems too clean, too perfect, or too dazzling, I want to know what’s hidden in the false bottom of the box. At Beyond the Abstract, the goal isn’t just to read the final conclusion—it’s to figure out what’s behind the curtain. Because in science, just like in magic, the real story often lies in what you don’t see.

The “Magic” Acts in Science

Just as magic tricks range from simple sleight-of-hand, like a parent pulling a quarter from behind a child’s ear, to elaborate illusions, such as David Copperfield making the Statue of Liberty disappear, research integrity issues vary widely in severity and implications. But whether a researcher excludes a few non-significant findings or outright fabricates data, both are “magic” tricks that undermine our ability to make evidence-based decisions. (And if you want to know how Copperfield did it, here’s an explainer!)

Like good magicians, unscrupulous researchers rely on intentional techniques to convince their audience that results are authentic. Here are the common 'magic acts' used to conceal flawed research.

Palming the Data (Selective Reporting):

Just as a magician subtly hides a coin in the palm, researchers might quietly exclude inconvenient data or outliers that don’t fit their hypothesis. The audience (readers, reviewers, funders) sees only the final, seemingly impressive, data set, not the concealed, problematic evidence. When we see only the supportive evidence, and none of the evidence that refutes it, we reach distorted conclusions.

Misdirection and Duplicate Props (Outcome Switching):

Magicians frequently distract the audience from crucial moments when they discreetly swap props or performers, using duplicate items or identical-looking body doubles, so that the illusion works perfectly. Similarly, researchers may subtly shift their outcomes or focus attention away from primary endpoints, emphasizing secondary outcomes or subgroup analyses to create the appearance of meaningful results. Just as the audience never sees the hidden duplicate prop or double, readers rarely notice that the initial study objectives have been quietly replaced or overshadowed by more impressive, carefully chosen findings.

Forced Choice (P-hacking):

In magic, an audience volunteer believes they've freely chosen a card or object at random, yet the magician predetermined the result by “forcing” their choice. Researchers engage in a similar technique called “p-hacking,” secretly conducting multiple analyses but only reporting those that yield statistically significant results. Although readers perceive the data as impartially analyzed, the outcome was effectively guaranteed from the start by repeated, selective testing—mirroring how a magician ensures a volunteer’s “free” choice leads precisely to the desired conclusion.
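To see how strongly repeated testing stacks the deck, here is a minimal simulation sketch. It is an illustration, not a reconstruction of any real study: every "study" compares two groups of pure noise, so there is no true effect to find. The function names, the sample sizes, and the simplifying assumptions (independent outcomes, known unit variance, a simple z-test) are mine. If the outcomes really are independent, the chance that at least one of k tests crosses p < 0.05 is 1 - 0.95^k, which is roughly 64% for 20 outcomes.

```python
import math
import random


def two_sided_p(sample_a, sample_b):
    """Two-sided z-test p-value for a difference in means.

    Assumes both samples come from populations with variance 1
    (true here, because we generate the data ourselves).
    """
    n_a, n_b = len(sample_a), len(sample_b)
    diff = sum(sample_a) / n_a - sum(sample_b) / n_b
    z = diff / math.sqrt(1 / n_a + 1 / n_b)
    # P(|Z| > |z|) for a standard normal Z equals erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))


def smallest_p(n_outcomes, n_per_group, rng):
    """Test several unrelated outcomes on pure noise; return the smallest p-value."""
    p_values = []
    for _ in range(n_outcomes):
        treated = [rng.gauss(0, 1) for _ in range(n_per_group)]
        control = [rng.gauss(0, 1) for _ in range(n_per_group)]
        p_values.append(two_sided_p(treated, control))
    return min(p_values)


def false_positive_rate(n_outcomes, n_studies=2000, n_per_group=30,
                        alpha=0.05, seed=0):
    """Share of null studies that could report at least one 'significant' result."""
    rng = random.Random(seed)
    hits = sum(
        smallest_p(n_outcomes, n_per_group, rng) < alpha
        for _ in range(n_studies)
    )
    return hits / n_studies


if __name__ == "__main__":
    # Columns: outcomes tested, simulated rate, theoretical 1 - 0.95^k.
    for k in (1, 5, 20):
        print(k, round(false_positive_rate(k), 3), round(1 - 0.95 ** k, 3))
```

The key design choice is that `smallest_p` reports only the best-looking result, which is exactly what a reader sees when the other analyses stay in the drawer. Real outcomes are usually correlated, so the inflation in practice can be smaller than this independent-outcome sketch suggests, but the direction is the same.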

The Hidden Assistant (Conflict of Interest):

Magicians often rely on hidden accomplices behind the curtain. In research, undisclosed conflicts of interest, such as funding sources or relationships that subtly influence the study design or interpretation, can silently steer the results. Likewise, overly friendly editors and peer reviewers can sometimes serve as secret assistants, helping a paper make it through the peer-review process. The researcher, like a magician, never mentions the behind-the-scenes help.

The Rigged Demonstration (False Transparency):

Magicians often go to great lengths to convince the audience that everything is legitimate—spinning a box to show it’s solid or inviting audience members to inspect a deck of cards. Similarly, researchers may present meticulously detailed methods sections, creating an illusion of complete transparency. However, just as the magician’s transparent gestures distract from hidden manipulations, researchers may subtly omit key methodological details, embellish steps, or gloss over limitations, leaving critical weaknesses unnoticed.

Not All “Magic” is an Illusion: Recognizing Real Feats

While we’ve focused on how magicians use deception, it’s important to remember: not all “magic” performances rely on trickery. Some are real feats—remarkable achievements born of extreme training, discipline, and endurance.

David Blaine famously held his breath underwater for over 17 minutes, a genuine accomplishment verified by independent observers, scientists, and Guinness World Records. Unlike a typical illusion, Blaine’s breath-holding feat involved no hidden compartments, secret tubes, or other tricks; it relied on rigorous preparation and came only after a series of failed attempts. (As an aside, the current world record for breath holding is astonishing: over 24 minutes!)

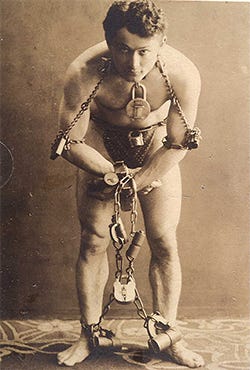

Similarly, Houdini, renowned for his escape illusions, also performed feats of extraordinary strength and physical resilience that were genuinely impressive—escaping real restraints through skill and practiced technique rather than mere deception or hidden aids.

Magical Metaphors

Think of these magic metaphors as a primer for future posts, where we’ll dive into real-world examples of research illusions in action. By unpacking these cases, we’ll sharpen our ability to spot flawed science—and to recognize when astonishing results are the real thing.

Just as an untrained audience can't always spot the sleight-of-hand, even peer reviewers can miss subtle manipulations hidden in published studies—especially without strong methods expertise or a healthy dose of skepticism.

Take the case of Alzheimer’s research: it took over 15 years before serious doubts emerged about a landmark study—one that helped define the field—after evidence surfaced of image manipulation and potentially fabricated data. A foundational pillar turned out to be built on smoke and mirrors.

Magicians entertain us by cleverly manipulating our perceptions and distorting reality; however, scientists are supposed to do the opposite. Spotting these “magic acts” in research isn’t just academic—it’s essential for protecting public trust, sound policy, and scientific progress.

Research Integrity Work: Separating Illusion from Reality

Ultimately, our goal in research integrity is to distinguish real scientific achievement from deceptive illusions. The task isn't merely to identify tricks; it's to systematically dissect exactly how these illusions were constructed. We scrutinize methods closely, checking whether data have mysteriously appeared or disappeared, whether analyses have been quietly manipulated, and whether the described protocols truly match what researchers did in practice.

But, equally important—and often more difficult—is recognizing legitimate science that appears astonishing yet withstands careful scrutiny. Discoveries such as the Higgs boson, gravitational waves, or CRISPR gene-editing technology may initially seem magical or counterintuitive, but they've been repeatedly validated through rigorous independent replication.

This exploration of research integrity and deception isn't about undermining science; rather, it's about strengthening trust by exposing illusions and affirming real scientific progress. Stay tuned as we pull back the curtain further, ensuring the "magic" stays on stage—and out of the scientific literature.

Stay Tuned!

At Beyond the Abstract, we’ll explore more than just research integrity. We’ll dive into the nuanced gray areas where data doesn’t lie—but can still mislead.

We’ll examine the culture of research and academia itself—how incentives, ego, peer review, and career pressures shape what gets published and what gets ignored. And yes, we’ll also offer academic career advice, because navigating this world requires more than just good science—it takes strategy and self-awareness.

These threads may seem separate, but they’re deeply interwoven. Understanding how science works means understanding the system that produces it.

If this resonates, please consider subscribing, sharing this post with a friend or colleague, and describing your own experiences with science’s 'magic tricks' in the comments.
